Large-Scale Information Extraction under Privacy-Aware Constraints

Tutorial Website https://www.microsoft.com/en-us/research/project/cikm-2021-tutorial-on-large-scale-information-extraction-with-privacy-aware-constraints/

Tutorial Description

In this digital age, people spend a significant portion of their lives online, and this has led to an explosion of personal data from users and their activities. Typically, this data is private, and nobody except the user is allowed to look at it. This poses interesting and complex challenges from a scalable information extraction (IE) point of view: information must be extracted under privacy-aware constraints, where there is little data to learn from, yet highly accurate models are needed to run on large amounts of data across different users. Anonymization is typically used to convert private data into publicly accessible data, but this may not always be feasible and may require complex differential privacy guarantees in order to be safe from any potential negative consequences. Other techniques involve building models on a small amount of seen (eyes-on) data and a large amount of unseen (eyes-off) data. In this tutorial, we use emails as representative private data to explain the concepts of scalable IE under privacy-aware constraints.

To extract information from machine-generated emails (flight confirmations, hotel reservations, package deliveries, etc.), one needs to classify the emails, cluster them into possible templates, build models to extract information from them, and monitor the models to maintain high precision and recall. How do IE techniques for private eyes-off data differ from those for eyes-on HTML data? How can labeled data be obtained in a privacy-preserving manner? What are the different techniques for generating semi-labeled data and learning from it? How can scalable extraction models be built across a number of sender domains using different ways to represent the information? How can these models be monitored with minimal human intervention? In this tutorial, we address all these questions from perspectives ranging from research to production.
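As a rough illustration of the "cluster them into possible templates" step, the sketch below groups machine-generated messages by sender domain and a masked text skeleton. It is only a minimal example under stated assumptions, not the method presented in the tutorial: the masking rules, the names `template_signature` and `cluster_by_template`, and the sample domains are all hypothetical. The clusters hold message indices rather than message text, which loosely mirrors the idea that the grouping can be inspected without reading the underlying private content.

```python
import re
from collections import defaultdict

# Hypothetical masking rules (assumptions for illustration only):
# variable-looking tokens are replaced by placeholders so that emails
# generated from the same template collapse to the same skeleton.
_MASKS = [
    # Upper-case alphanumeric codes with at least one letter and one digit.
    (re.compile(r"\b(?=[A-Z0-9]*[A-Z])(?=[A-Z0-9]*\d)[A-Z0-9]{6,}\b"), "<CODE>"),
    # Stand-alone runs of digits (order numbers, amounts, and so on).
    (re.compile(r"\b\d+\b"), "<NUM>"),
]


def template_signature(text):
    """Return the text with variable tokens masked and whitespace normalised."""
    signature = text
    for pattern, placeholder in _MASKS:
        signature = pattern.sub(placeholder, signature)
    return " ".join(signature.split())


def cluster_by_template(emails):
    """Group (sender_domain, text) pairs by domain and masked skeleton.

    Returns a dict mapping (domain, signature) to a list of message indices.
    """
    clusters = defaultdict(list)
    for index, (domain, text) in enumerate(emails):
        clusters[(domain, template_signature(text))].append(index)
    return dict(clusters)


if __name__ == "__main__":
    sample = [
        ("shop.example", "Order 12345 has shipped"),
        ("shop.example", "Order 67890 has shipped"),
        ("air.example", "Booking XK42PQ9 confirmed"),
        ("air.example", "Booking ZZ91AB3 confirmed"),
    ]
    for key, members in cluster_by_template(sample).items():
        print(key, members)
```

In practice, templates for HTML emails would more likely be derived from document structure than from plain-text masking; the plain-text version is used here only to keep the sketch short.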

Tutorial Organisers


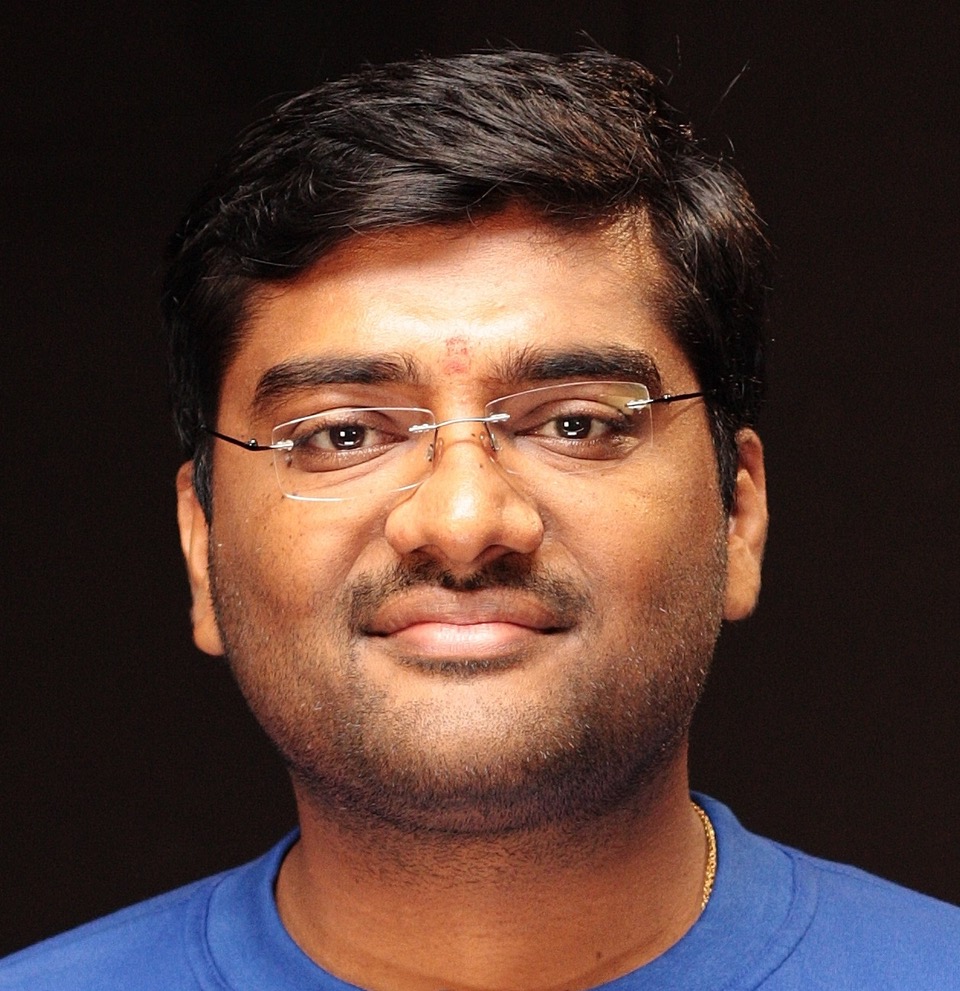

Rajeev Gupta, Microsoft

Rajeev Gupta is a Principal Applied Researcher at Microsoft Search Assistant & Intelligence (MSAI), India. He received his PhD in Computer Science from the Indian Institute of Technology (IIT) Bombay. He has more than 30 publications and 20 patents in the areas of data management, information extraction, and distributed computing, with papers in reputed conferences and journals such as TKDE, ICDE, VLDB, WWW, SIGMETRICS, CIKM, and KDD. For more than four years, he has been working on applying AI to information extraction and mining, enabling intelligence in Microsoft Office.

Ranganath Kondapally, Microsoft

Ranganath Kondapally is a Principal Applied Researcher at Microsoft Search Assistant & Intelligence (MSAI), India. He received his PhD in Computer Science from Dartmouth College in the area of computational complexity and streaming algorithms. His areas of interest include information extraction, machine learning algorithms, and complexity theory. He has numerous publications and patents to his name in the areas of information extraction, streaming algorithms, and virtual reality. Currently, he is working on information extraction and inferencing problems on big data, powering delightful personal assistant experiences.
